Connect4 AI and Naive programming

Like many others, I started coding by completing Harvard's CS50. For the final project, I decided to build a Connect4 "AI". But I wanted to do it from first principles, which meant doing zero research on existing Connect4 theory or classical AI algorithms. In a way, I put myself on an island with nothing but a Connect4 board and had to build the "AI" without any other input. Back then, I wrote:

I wanted to create an AI that was borne of my own intelligence and my own intelligence alone. To prepare for this project, I downloaded a Connect 4 app from the App Store and played more than 100 games against their AI on the hardest setting. Not only did I have to use pattern recognition and experience to teach myself how to play the game well, but I also had to break it down in a way that my playstyle could be implemented in code--and that was the fun of it. The key problem of how to convey my own playstyle in code allowed me to produce the hierarchy system as the solution. Because I wanted it to be a challenge where the program is borne out of my own intelligence and nothing else, I purposely avoided researching strategies, tactics, and opening theory for the game. If I were to implement tactics, I would have learned the tactics on my own, simply by playing the game myself. Moreover, I had no doubt that many people have created Connect 4 AIs before, probably even for CS50. But I did not want to learn about how they did it and implemented this method completely originally. I am certain there are more elegant ways to build stronger, perfect, and completely unbeatable AIs, but my goal here was to learn and have fun, and absolutely not to make the world's strongest Connect 4 AI.

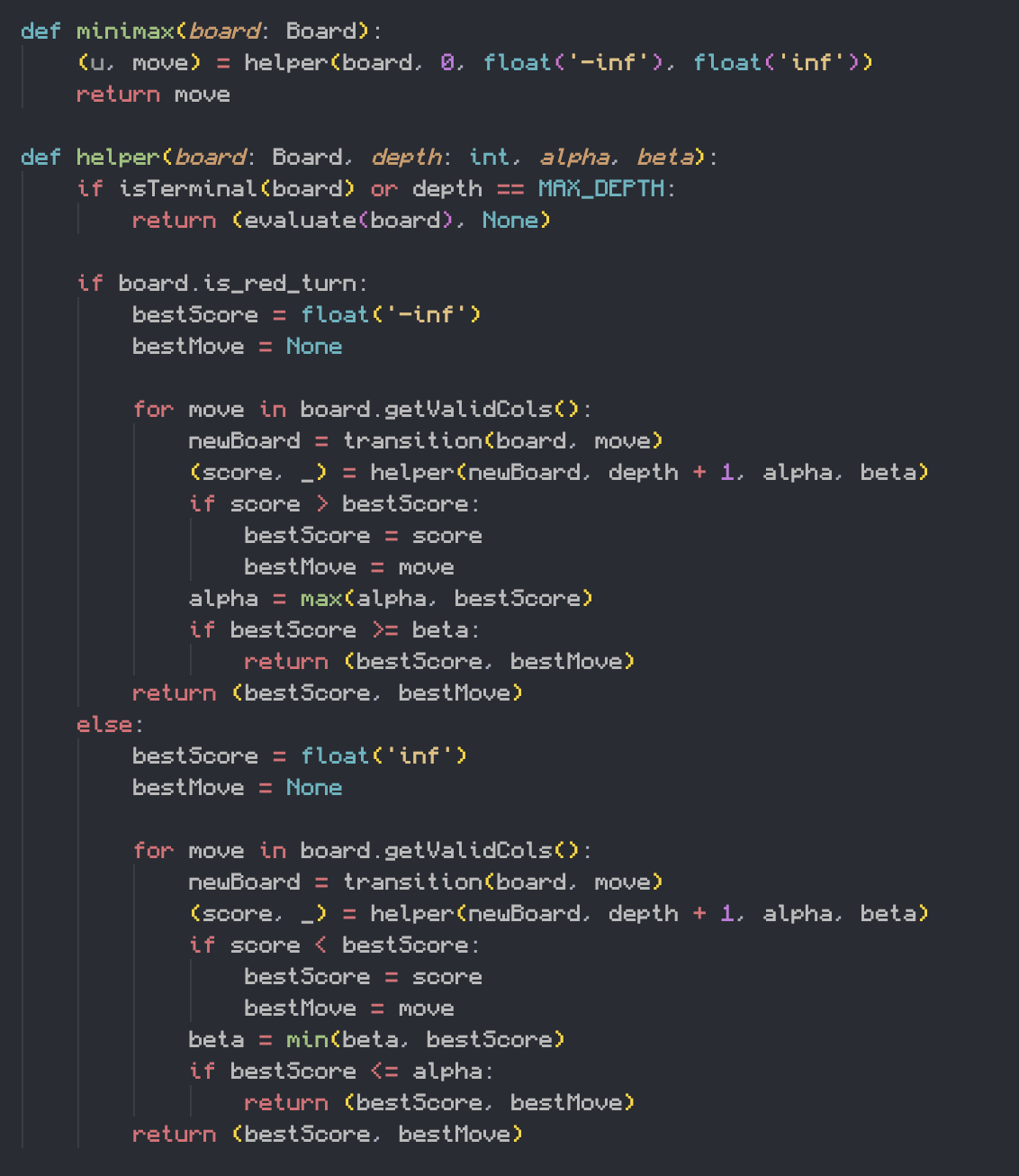

Having now learnt classical AI algorithms, I decided to come full circle and build the AI classically. No doubt, even at limited depth, minimax destroys my old hardcoded algorithm. In fact, I think there's some edge case where my old program hits a runtime error. My point is, the code was horrible. But I enjoyed the process of thinking and formulating. It felt like "deep learning" in the literal, biological sense. Developing subconscious thoughts, intuitions and opinions about anything is easy. Solidifying them into concrete words and sentences is hard. Writing them into a program was even harder. But it was satisfying.

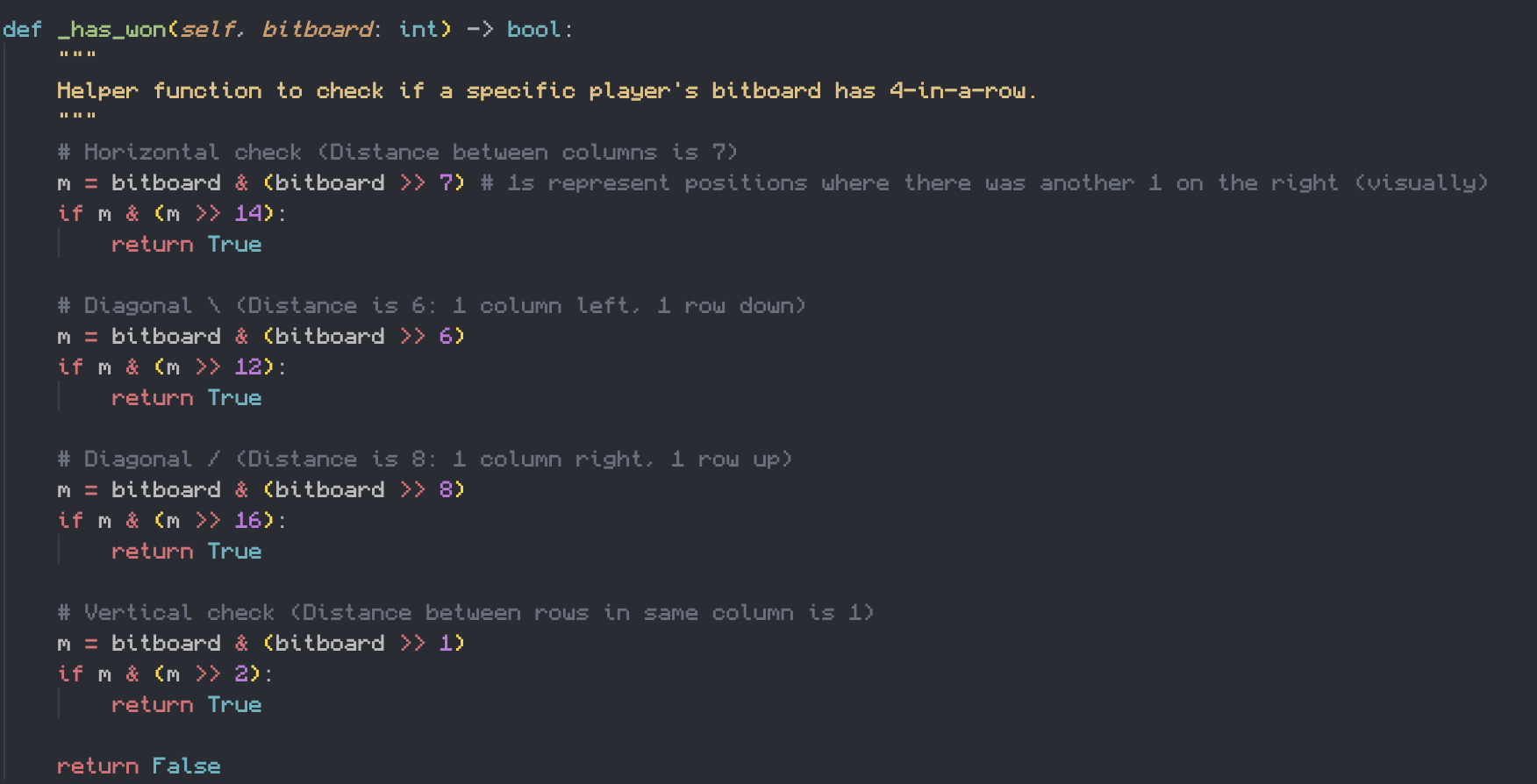

I was able to implement the classical Connect4 AI in less than half a day, using Gemini as a peer-coder. It was good practice implementing minimax, but I found learning about the bitboard representation of the game state the most satisfying. What was helpful, but also scary, is how good Gemini was at helping me write the code, and also how good it was at helping me understand it. It's hard to imagine a future where programmers are just prompt engineers.
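For anyone who hasn't met bitboards before, here is a minimal sketch of the idea. It is not the code I wrote with Gemini; it assumes one common layout (a 7x6 board stored column by column, 7 bits per column, with the top bit of each column left empty as a sentinel), and the names `bit` and `has_four` are made up for illustration. Each player's stones live in a single integer, and checking for four in a row becomes a handful of shifts and ANDs.

```python
WIDTH, HEIGHT = 7, 6
H1 = HEIGHT + 1  # bits per column: 6 playable cells plus 1 always-empty sentinel


def bit(col: int, row: int) -> int:
    """Mask with only the cell (col, row) set; row 0 is the bottom of a column."""
    return 1 << (col * H1 + row)


def has_four(board: int) -> bool:
    """True if one player's stones (as a bitboard) contain four in a row."""
    # Shift 1 moves up a column, H1 moves one column right,
    # H1 - 1 and H1 + 1 are the two diagonals.
    for shift in (1, H1, H1 - 1, H1 + 1):
        pairs = board & (board >> shift)      # cells that start a run of two
        if pairs & (pairs >> (2 * shift)):    # two runs of two, two steps apart
            return True
    return False


# Four stones along the bottom row, columns 0 to 3.
row_win = bit(0, 0) | bit(1, 0) | bit(2, 0) | bit(3, 0)
# Only three stones along the bottom row.
row_three = bit(0, 0) | bit(1, 0) | bit(2, 0)
# Top two cells of column 0 plus bottom two of column 1:
# adjacent in memory apart from the sentinel bit, but not a real line.
wrap = bit(0, 4) | bit(0, 5) | bit(1, 0) | bit(1, 1)
```

The sentinel bit is what keeps the `wrap` case from counting as a vertical win: without the gap, the top of one column would run straight into the bottom of the next.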

To avoid being replaced by AI, a human must capitalise on the human aspect: what AI cannot replicate. What is the human aspect of programming? Well, if anything, if you asked Gemini to build a Connect4 AI, it certainly wouldn't be able to replicate my poorly written naive algorithm. Perhaps then, the human aspect of programming is using naive human intuition to write bad code for novel problems? What are these novel problems? Where do we find them? Would a human really be able to intuit better solutions than an AI?

The good thing is that, in the future, solving problems that have already been solved will probably no longer be a job. What I mean is that, for example, how to build a website is a problem that has already been solved. So AI can do it. And I like that, because solving problems that have already been solved is boring and is basically just boilerplate. The bad thing is that most people, other than researchers, are probably not used to working on unsolved problems. So am I saying that the only jobs left will be those at the frontier of knowledge, i.e. research? Maybe. But maybe there will also be AI researchers in the future.

I don't really know what point I am trying to make. Like I said, having abstract thoughts and intuitions is easy. Forming them into a concrete argument is hard. So I guess what my gut is trying to say is that, somehow, it was really important that I built the naive algorithm, and that the experience will help me some day.